Seven Questions to Ask Your AI Vendor

Many AI procurements run into the same problem: the buyer does not know which questions to ask. Here are seven questions that show whether a vendor takes data protection seriously.

Imagine an agency that uploads 200 client contracts to a public AI service to get summaries. Three months later, a client asks where its data has gone, and the agency cannot answer. It never asked in the first place.

This happens more often than most will admit. Smaller companies buying AI services rarely have time for a thorough technical and legal review, and vendor materials tend to explain how the product works rather than what the customer needs to verify before signing.

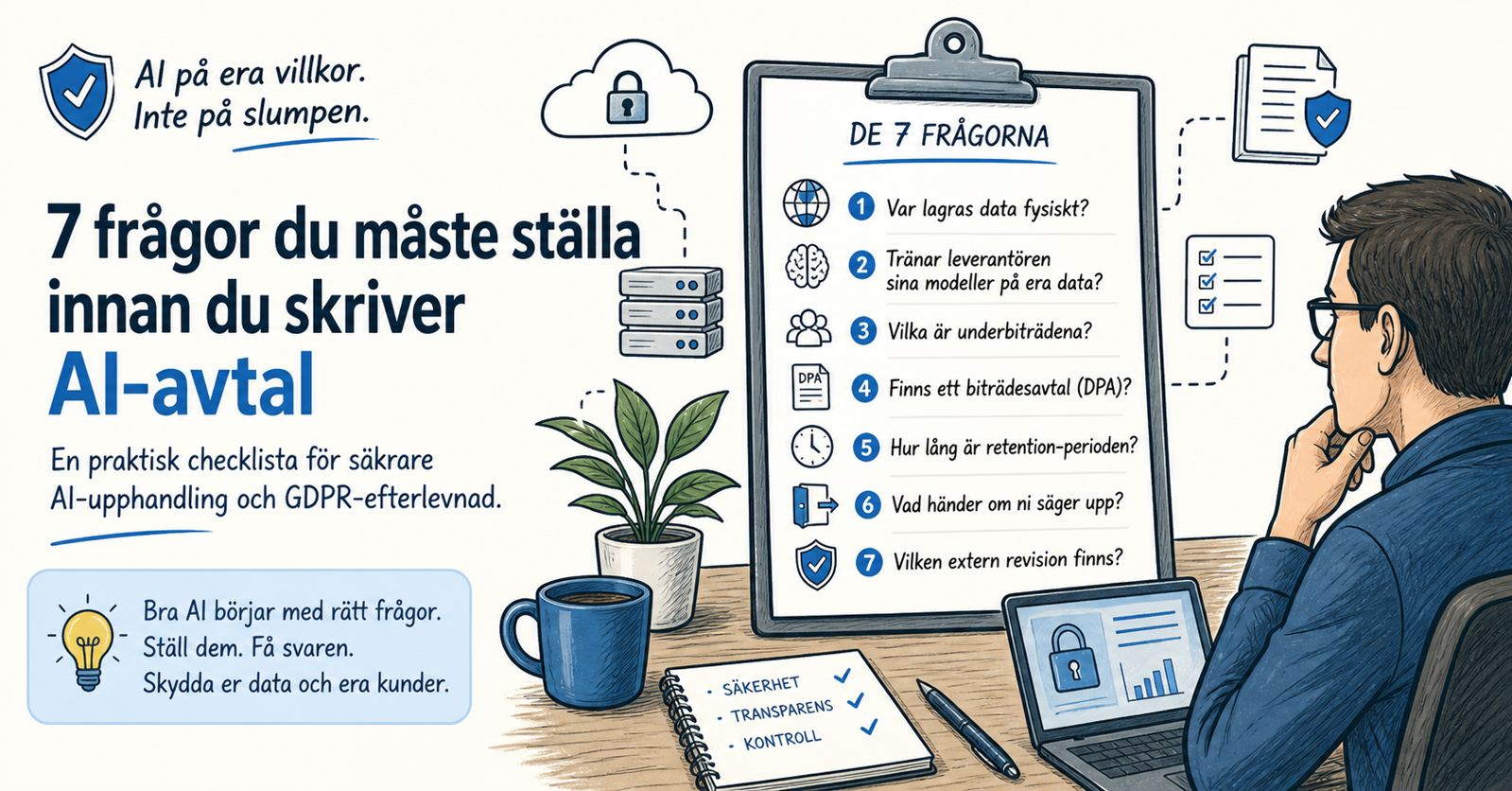

Below are seven questions every company should ask before signing an AI agreement. Use them as a checklist and as a starting point for a more careful review. Remember, this is not legal advice.

Why These Seven Questions

Since the EU AI Act entered into force on August 1, 2024, its rules have applied in stages. The prohibitions on certain AI practices and the AI literacy requirements have applied since February 2, 2025, and the rules for general-purpose AI models since August 2, 2025. Under the current timeline, most of the regulation applies from August 2, 2026. The AI Act applies alongside the GDPR whenever AI systems process personal data. GDPR Article 28 governs the contract between the data controller and the data processor, while the AI Act focuses on risk classification, transparency, and obligations for providers and deployers of certain AI systems. IMY (the Swedish Authority for Privacy Protection) supervises the GDPR in Sweden; responsibility for the AI Act in Sweden is expected to be shared among several authorities.

In practice, much of the procurement work comes down to seven questions about where data is stored, how it is used, who may process it, and what happens when the contract ends. Settling these early makes the whole process smoother and safer for both parties.

The Seven Questions

1. Where is data physically stored?

A vendor may be based in Sweden but use servers or subcontractors outside the EU, which makes it a third-country transfer under the GDPR. If any provider in the chain is subject to US jurisdiction, the US CLOUD Act also matters when you assess access risks, even if the data itself is stored inside the EU.

Good answer: A specific country and region, for example "Hostinger, EU (Lithuania)" or "AWS Frankfurt eu-central-1".

Red flag: Only "in the cloud" or "in the EU", with no further detail.

2. Does the vendor train its models on your data?

If it does, your documents may be used to improve models, kept longer than you expect, or become hard to delete later. A well-known example: Samsung restricted internal use of generative AI in 2023 after employees entered sensitive information into external AI services.

Good answer: A written guarantee in the data processing agreement or in the vendor's commercial terms. Anthropic, for example, states for its commercial products that customer inputs and outputs are not used for model training by default. The commitment must be verifiable and backed by the contract.

Red flag: "We have a strict policy" without contractual language or source reference.

3. Who are the sub-processors?

AI stacks often have many layers. Your vendor may call a language model from one provider that runs on another provider's infrastructure, and each of them may have sub-processors of its own. GDPR Article 28(2) requires the processor to obtain the controller's prior written authorization, specific or general, before engaging another processor. Under a general authorization, the processor must inform the controller of any intended changes, giving the controller the opportunity to object.

Good answer: "Here is our public list with names, countries, and purposes. We notify you before any change." A notice period of 30 days is common in commercial agreements. Microsoft's Service Trust Portal is a good example of how a large vendor publishes its security and compliance documentation in one place.

Red flag: "We use leading sub-processors" without naming them.

4. Is there a Data Processing Agreement (DPA)?

GDPR Article 28(3) requires that processing by a processor be governed by a contract or other legal act that is binding on the processor. The agreement sets out the subject matter, duration, nature, and purpose of the processing, the types of personal data involved, and the obligations and rights of both parties.

Good answer: A public DPA on the vendor's website that you can sign without lengthy negotiation. Stripe, Anthropic, and Microsoft all publish their DPAs this way.

Red flag: "We can draft one if needed," or terms that do not clearly state how the requirements of Article 28 are met.

5. How long is the retention period?

Make sure the vendor's retention periods match your own retention policy. A vendor that logs prompts for 12 months makes deletion, access requests, and compliance with GDPR Article 17 (the right to erasure) harder when those logs contain personal data.

Good answer: A specific number of days for each data category. "Prompts: 30 days. Logs: 90 days. Training index: deleted within 24 hours of removal."

Red flag: "As long as necessary."
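To act on question 5, you can line up the vendor's stated retention periods against your own policy, category by category. The Python below is a minimal sketch under stated assumptions: the category names and the internal limits in `OUR_POLICY_DAYS` are hypothetical, and the vendor figures are taken from the example answer above, with 24 hours counted as one day.

```python
from __future__ import annotations

# Hypothetical internal retention limits, in days, per data category.
OUR_POLICY_DAYS = {
    "prompts": 30,
    "logs": 60,
    "training_index": 1,
}

# Vendor's stated retention in days, from the example answer above.
# None (or a missing key) means no specific figure, e.g. "as long as necessary".
VENDOR_DAYS = {
    "prompts": 30,
    "logs": 90,
    "training_index": 1,  # "deleted within 24 hours of removal"
}


def retention_gaps(ours: dict[str, int], vendor: dict[str, int | None]) -> list[str]:
    """Return one finding per category where the vendor does not meet our policy."""
    findings = []
    for category, limit in ours.items():
        days = vendor.get(category)
        if days is None:
            findings.append(f"{category}: no specific retention period stated")
        elif days > limit:
            findings.append(f"{category}: vendor keeps {days} days, our policy allows {limit}")
    return findings


for finding in retention_gaps(OUR_POLICY_DAYS, VENDOR_DAYS):
    print(finding)
```

With these example values, only log retention is flagged (90 days against a hypothetical 60-day limit). A vague answer is recorded as `None` and flagged too, which mirrors the red flag above.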

6. What happens if you terminate?

GDPR Article 28(3)(g) requires the processor, at the controller's choice, to delete or return all personal data when the service ends. Separately, GDPR Article 20 gives data subjects a right to data portability in certain cases.

Good answer: "You can export your data in a standard format such as JSON or CSV. We then delete it within an agreed period, for example 30 days, and send you a written confirmation when deletion is complete."

Red flag: No clear answer, or only a referral to customer support.

7. What external audits exist?

ISO/IEC 27001 and SOC 2 are the best-known frameworks. ISO/IEC 27001 is a certifiable standard for information security management, while SOC 2 is an attestation report issued by an independent auditor. Either one means that an independent party has reviewed the vendor's security controls.

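Good answer: A valid ISO/IEC 27001 certificate (issued for three years, with regular surveillance audits) or a recent SOC 2 Type II report, which usually covers a period of 6 to 12 months, and the ability to request the audit report under NDA.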

Red flag: "We follow industry standards."
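The seven questions can also be kept as a simple structured checklist per vendor. The Python below is a hypothetical sketch, not a compliance tool: the `VendorAnswers` fields and the set of vague location answers are assumptions made for illustration, and a yes in every field still needs to be checked against the actual contract.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Location answers the checklist treats as too vague (see question 1).
VAGUE_LOCATIONS = {"in the cloud", "in the eu"}


@dataclass
class VendorAnswers:
    """Hypothetical record of one vendor's answers to the seven questions."""
    storage_location: str = ""                # 1. e.g. "AWS Frankfurt eu-central-1"
    no_training_in_contract: bool = False     # 2. no-training commitment is in the contract
    subprocessor_list_public: bool = False    # 3. named list with countries and purposes
    dpa_available: bool = False               # 4. signable Article 28 DPA
    retention_days_per_category: dict[str, int] = field(default_factory=dict)  # 5.
    deletion_confirmed_on_exit: bool = False  # 6. deletion plus written confirmation
    audits: list[str] = field(default_factory=list)  # 7. e.g. ["ISO/IEC 27001"]


def open_issues(a: VendorAnswers) -> list[str]:
    """List the questions whose answer is missing or matches a red flag."""
    issues = []
    location = a.storage_location.strip().lower()
    if not location or location in VAGUE_LOCATIONS:
        issues.append("1. storage location not specific")
    if not a.no_training_in_contract:
        issues.append("2. no contractual no-training commitment")
    if not a.subprocessor_list_public:
        issues.append("3. no public sub-processor list")
    if not a.dpa_available:
        issues.append("4. no Article 28 DPA")
    if not a.retention_days_per_category:
        issues.append("5. no retention period per data category")
    if not a.deletion_confirmed_on_exit:
        issues.append("6. no confirmed deletion on termination")
    if not a.audits:
        issues.append("7. no external audit")
    return issues


vendor = VendorAnswers(
    storage_location="in the EU",
    no_training_in_contract=True,
    dpa_available=True,
    retention_days_per_category={"prompts": 30, "logs": 90},
    audits=["ISO/IEC 27001"],
)
for issue in open_issues(vendor):
    print(issue)
```

For this example vendor, questions 1, 3, and 6 come back as open issues, which gives you a concrete list to take into the next meeting.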

When is a Data Protection Impact Assessment (DPIA) Required?

GDPR Article 35 requires a Data Protection Impact Assessment when processing is likely to result in a high risk to individuals' rights and freedoms. IMY describes the impact assessment as an ongoing, carefully documented process.

A DPIA is typically needed when processing involves large-scale profiling, automated decision-making with legal or similarly significant effects, or large-scale processing of sensitive personal data such as health data, religious beliefs, or political opinions.

In simpler situations, a DPIA may not be required. A public-facing customer service chatbot, for example, can be low risk if processing is limited, a human stays in control, and no sensitive data or automated decisions are involved. Translating texts that contain no personal data, or summarizing public documents, is usually lower risk still.

For simpler cases, a structured workshop with technical and legal expertise in the same room is often sufficient. For more complex situations, you can use CNIL's DPIA template or IMY's own guidance as a good starting point.
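The screening step described above can be written down as a first-pass check that a team fills in before the workshop. The Python below is a minimal sketch, not a legal test: the `Processing` fields are hypothetical, it covers only the three triggers named above, and an empty result does not remove the need to document why no DPIA was done.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Processing:
    """Hypothetical description of a planned AI use case."""
    large_scale_profiling: bool = False
    automated_decisions_with_legal_effect: bool = False
    sensitive_data: bool = False  # e.g. health, religion, political opinions


def dpia_triggers(p: Processing) -> list[str]:
    """Return the triggers that apply; any hit suggests a DPIA is needed."""
    triggers = []
    if p.large_scale_profiling:
        triggers.append("large-scale profiling")
    if p.automated_decisions_with_legal_effect:
        triggers.append("automated decision-making with legal or similarly significant effects")
    if p.sensitive_data:
        triggers.append("sensitive personal data")
    return triggers


chatbot = Processing()  # limited processing, human in control, no sensitive data
print(dpia_triggers(chatbot))  # → []
```

Keeping the triggers as explicit fields makes the screening decision easy to record alongside the workshop notes.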

What Works Against Good Procurement

A verbal assurance without a written contract is insufficient. Saying "We don't train on your data" during a sales meeting doesn't help if the DPA says something else. Always read through the contract carefully before making a decision.

Generic DPAs that do not address AI-specific risks are another problem. Many SaaS vendors still use templates written before generative AI and have not updated them to cover model training, prompt logging, or model providers acting as sub-processors. Review them for these points before signing.

The sub-processor list is also often overlooked. It should be reviewed with the same care as the main vendor's terms.

Conclusion

The seven questions make the conversation with a vendor concrete. An AI vendor that takes data protection seriously should be able to answer all seven clearly and directly. A vendor that avoids answering often does not have good answers. We have published our own answers to all seven questions: our Data Processing Agreement and our sub-processor list are available for everyone to see. If your current vendor will not match this, or if you need help evaluating several options, email info@gothiaai.se. We are happy to help guide you in the right direction.